More than a dozen companies offer artificial-intelligence programs that promise to identify a person’s race, but researchers and even some vendors worry it will fuel discrimination

When Revlon Inc. wanted to know what lipstick women of different races and in different countries were wearing, the cosmetics giant didn’t need to send out a survey. It hired Miami-based Kairos Inc., which used a facial-analysis algorithm to scan Instagram photos.

Back then, in 2015, the ability to scan a person’s face and identify his or her race was still in its infancy. Today, more than a dozen companies offer some form of race or ethnicity detection, according to a review of websites, marketing literature, and interviews.

In the last few years, companies have started using such race-detection software to understand how certain customers use their products, who looks at their ads, or what people of different racial groups like. Others use the tool to seek different racial features in stock photography collections, typically for ads, or in security, to help narrow down the search for someone in a database. In China, where face tracking is widespread, surveillance cameras have been equipped with race-scanning software to track ethnic minorities.

The field is still developing, and it is an open question how companies, governments, and individuals will take advantage of such technology in the future. The use of the software is fraught, as researchers and companies have begun to recognize its potential to drive discrimination, posing challenges to widespread adoption.

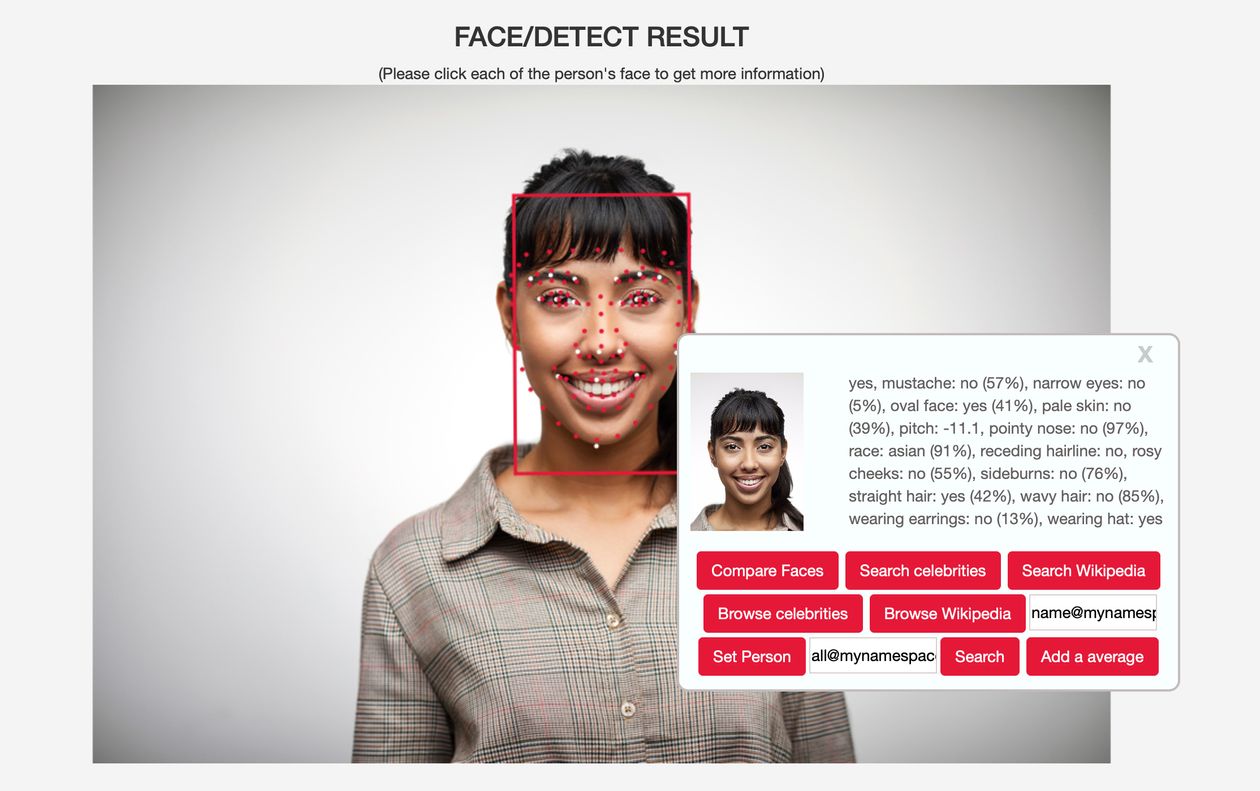

PHOTO: BETAFACE

A spokeswoman for Revlon says it was unable to comment because the Instagram scanning happened several years ago. Kairos didn’t respond to repeated requests for comment.

Race-detection software is a subset of facial analysis, a type of artificial intelligence that scans faces for a range of features—from the arch of an eyebrow to the shape of the cheekbones—and uses that information to draw conclusions about gender, age, race, emotions, even attractiveness. This is different from facial recognition, which also relies on an AI technique called machine learning, but is used to identify particular faces, for instance, to unlock a smartphone or spot a troublemaker in a crowd.

Facial analysis is “useful for marketers because people buy in cohorts and behave in cohorts,” says Brian Brackeen, a founder of Kairos who left in 2018 and now invests in startups through Lightship Capital.

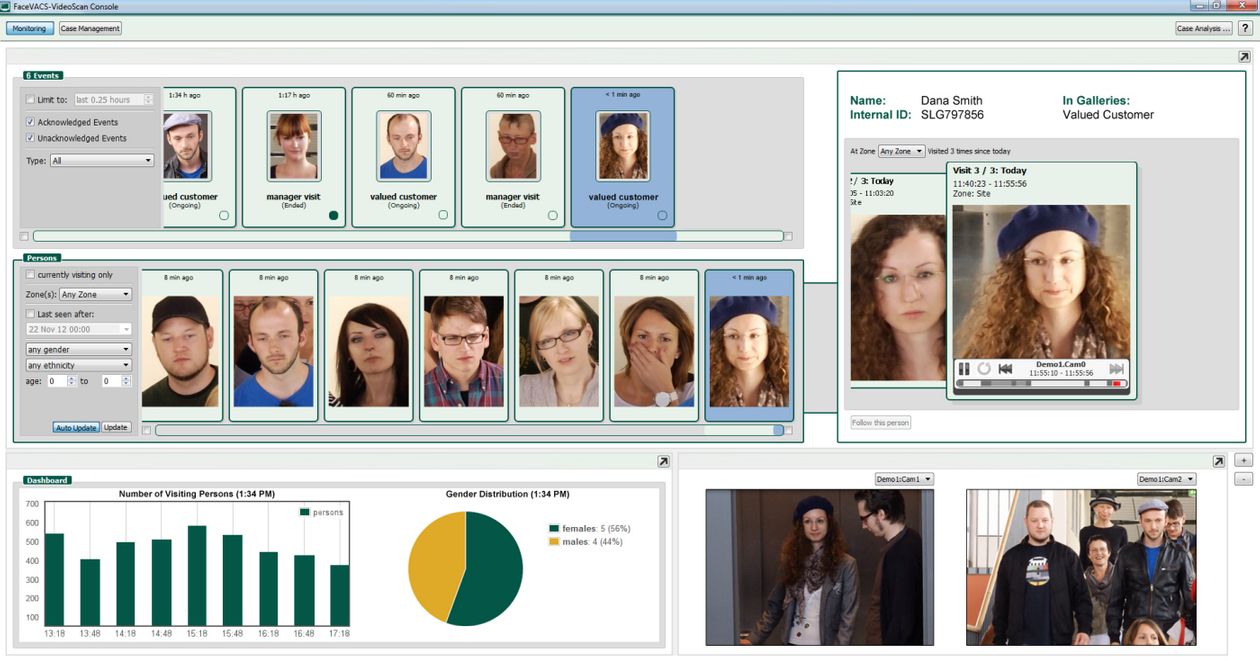

For retailers and other businesses, facial analysis of camera footage promises the ability to learn more about customers in brick-and-mortar settings, much like they have long used cookies, or small data files that track people’s internet activity, to target online ads. “The physical world is a big vacuum of data because there’s nothing there,” says Ajay Amlani, senior vice president of corporate development at Idemia SAS, a French firm that offers facial recognition and other identification services. Now cameras are starting to play a similar role to cookies.

New York-based Haystack AI Inc. says its customers use its race-classification feature for ad targeting, market research and to help authenticate people’s identities. Germany’s Cognitec Systems GmbH offers ethnicity detection for retailers and other companies to collect statistical data about their visitors, says Juergen Pampus, its sales and marketing director. He said no customers had yet bought a license for race classification, though they had bought software for classifying ages and genders.

PHOTO: COGNITEC SYSTEMS

Spectrico, a Bulgarian startup that sells a perpetual, unlimited license for its race-classification tool for 1,000 euros (about $1,170), says that dating sites use it to check if profiles are accurate, while advertisers use it to track the demographics of people looking at smart billboards. Founder Martin Prenev said a few customers have purchased the race-classification tool, and more bought it in combination with a gender- and age-classification tool.

China’s Face++, one of the world’s biggest facial-recognition and facial-analysis companies, was valued at $4 billion in May 2019, according to market intelligence firm PitchBook. It says on its website that its race-detection feature can be used for consumer-behavior analysis and ad targeting.

Facial analysis has largely flown under the radar, even as facial recognition has come under fire because poorly trained systems have misidentified people of color. Places such as Boston, San Francisco, Washington state and California have curbed the use of facial recognition in law enforcement. IBM Inc., Alphabet Inc., and Microsoft Corp. have limited their facial-recognition businesses, particularly in selling to police departments.

Research into facial analysis continues. Some scientists say that algorithms trained to identify a person’s race can be startlingly accurate. In May, two scientists from Ruhr-Universität Bochum in Germany published a paper in the scientific journal Machine Learning showing that their algorithm could estimate whether a face was white, Black, Asian, Hispanic, or “other” with 99% accuracy. The researchers trained their algorithms on a database of prison mug shots that included a label for the race of each person, according to Dr. Laurenz Wiskott, one of the study’s authors.

Some researchers and even vendors say race-based facial analysis should not exist. For decades, governments have barred doctors, banks, and employers from using information about race to decide which patients to treat, which borrowers to grant mortgages and which job applicants to hire. Race-detection software poses the disconcerting possibility that institutions could, intentionally or not, make decisions based on a person’s ethnic background, in ways that are harder to detect because they occur inside complex or opaque algorithms, according to researchers in the field.

PHOTO: HANNAH WHITAKER FOR THE WALL STREET JOURNAL

Using AI to identify ethnicity “seems more likely to harm than help,” says Arvind Narayanan, a computer science professor at Princeton University who has researched how anonymized data can be used to identify people.

A person of mixed race might disagree with how an algorithm classifies them, says Carly Kind, director of the Ada Lovelace Institute in London, a research group that focuses on applications of AI. “Technological systems have the power to turn things into data, into facts, and seemingly objective conclusions,” she says.

Software that lets employers scan the micro-expressions of job candidates during interviews could inadvertently discriminate if it starts to factor in race, Ms. Kind says. Police who classify ethnicity using facial-analysis software run the risk of exacerbating racial profiling, she says.

Ethnicity recognition could also be harmful if companies use it to push marginalized people toward specific products or offer discriminatory pricing, says Evan Greer, deputy director of digital rights group Fight for the Future.

The use of face-scanning technology in China has been controversial, most notably in Xinjiang, a region in the country’s northwest where authorities have used it to surveil the Uighur Muslim minority. Last year, a Chinese surveillance camera maker, Hangzhou Hikvision Digital Technology, advertised a camera on its website that could automatically identify Uighurs, according to security-industry trade publication IPVM.

PHOTO: GREG BAKER/AGENCE FRANCE-PRESSE/GETTY IMAGES

Mr. Brackeen, who is Black, championed Kairos’s race-recognition system because it could help businesses tailor their marketing to ethnic minorities. Now, Mr. Brackeen believes that race-detection software has the potential to fuel discrimination.

He is comfortable with businesses using it, but he says governments should not use it and is wary of consumers using it too. Kairos released a free app in 2017 that estimated race, showing users who uploaded a selfie how Black, white, Asian or Hispanic they were. “I hoped that people would see that 10% Black score and see the humanity in other people,” Mr. Brackeen says.

More than 10 million people submitted selfies to the app to see the results. Many of the app’s users, particularly in Brazil, complained on social media that their scores weren’t white enough, Mr. Brackeen says. The app was shut down.

In Northern Europe, a furniture chain used the facial-analysis software of Dutch facial-recognition firm Sightcorp B.V. to screen customers entering its stores, and learned that many of them were younger than it had expected. It subsequently hired more people in their 20s and 30s to be floor staff, according to Joyce Caradona, chief executive of Sightcorp. Her company stopped selling its own ethnicity classification feature in 2017, on concerns it might contravene Europe’s data-privacy laws.

Facewatch Ltd., a British facial-recognition firm whose software spots suspected thieves as they enter a store by screening them against a watch list, earlier this year removed an option to track the race, gender or age of shoppers, since “this information is irrelevant,” a spokesman says.

WSJ / Balkantimes.press

Copyright Notice: It is allowed to download the content only by providing a link to the page of our portal from which the content was downloaded. The views expressed in this text are those of the authors and do not necessarily reflect the editorial policies of The Balkantimes Press.